I am working on a project, part of which is an Android application. Using Android Studio is a frustrating experience, and I have already failed to start some projects because of its “usefulness”. The project is quite important to me and the amount of development to be done is rather significant, so this time I decided to work around it as much as I can. Normally I would use a low-level language for this, as it gives the best performance, or Python for its ease of use. But I already have experience building complex stuff in C/C++ and have tried web apps in Python/Django, so I know it would be too much for a hobby project. At the same time, I remember PHP as a very nice tool for someone with little experience. So after preparing a successful PoC for the PHP side, I decided to bundle the whole of PHP inside an Android application. This makes it possible to deliver an environment built on concepts similar to the notorious Electron, but far less resource-hungry. Let me present PHP for Android development. To be clear, I only cover the binary side here, without any Android project configuration; if I manage to prepare a demo app, it will become part 2 of this post. Continue reading “PHP build for use bundled in Android applications”

Tag: Linux

Running graphical apps inside Docker containers

From time to time I want to use an application that I do not consider trustworthy. If the app only has a console interface, this is easy: create a new disposable Docker container and that’s it. For apps using Xorg, however, it is not so easy. In such cases the quickest solution is either a dedicated virtual machine or a separate PC reserved exactly for this use case. However, neither of these two solutions is convenient or fast enough, especially for resource-hungry applications. For the smoothest experience, Docker still sounds like the best option. For exactly this purpose I created a template that should allow running any application locked in a Docker jail, optionally cut off from internet access. Continue reading “Running graphical apps inside Docker containers”
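The template itself is behind the link; what follows is only a minimal sketch of the usual ingredients, not the author's template. It assumes an X11 host whose X server listens on the standard `/tmp/.X11-unix` socket and that X access control (xhost or an Xauthority file) is handled separately. The image and program names are placeholders.

```python
import os
import shlex
from typing import List, Optional, Sequence


def gui_run_args(
    image: str,
    command: Sequence[str] = (),
    *,
    display: Optional[str] = None,
    offline: bool = True,
) -> List[str]:
    """Build a `docker run` command line for a disposable GUI container."""
    if display is None:
        # Reuse the host's display unless told otherwise.
        display = os.environ.get("DISPLAY", ":0")
    args = [
        "docker", "run",
        "--rm",                                 # throw the container away on exit
        "-e", f"DISPLAY={display}",             # X clients read the display from $DISPLAY
        "-v", "/tmp/.X11-unix:/tmp/.X11-unix",  # share the host X server's Unix socket
    ]
    if offline:
        # Only a loopback interface inside: no internet access for the app.
        args += ["--network", "none"]
    args.append(image)
    args.extend(command)
    return args


if __name__ == "__main__":
    # "untrusted-gui:latest" and "some-app" are placeholders for your own image and program.
    print(shlex.join(gui_run_args("untrusted-gui:latest", ["some-app"])))
```

Keep in mind that the jail covers the filesystem and network, not the X session: any client connected to the X server can, for example, observe input going to other windows.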

Plugin architecture demo for Python projects

I started work on this concept many months ago. However, I never had enough free time to bring it to a complete state. Today I have finally prepared a demo with all the crucial features included. So, first, let me explain the concept. The general idea is to have a command that is easily extensible with new features. The best way to achieve that is a plugin architecture: there is a main program (in this case really just an interface, as it provides no useful features on its own) and any number of plugins that register themselves with it.

The crucial thing here is that registration happens automatically, so all the user has to do is install the plugin. Another important feature is bash completion for this program, so that plugin developers can focus on the interface itself rather than on integrating it with bash, and still get fairly good completion. Of course, in more complicated cases the developer still has to do some extra work, but let’s ignore that here, as it is not so important.

To achieve all of the above I created two demo projects. One, called menu, implements the plugin interface and does all the necessary plumbing, so that plugins can live as almost independent applications that are merely invoked by menu. The other is a demo plugin that uses all this magic to appear as a command in menu right after it is installed.

So from the user’s perspective there are two main steps:

- Install menu and all the plugins they need with pip

- Enable bash completion, as is usually done with argcomplete

Continue reading “Plugin architecture demo for Python projects”

Authorizing adb connections from Android command line (and making other service calls from cli)

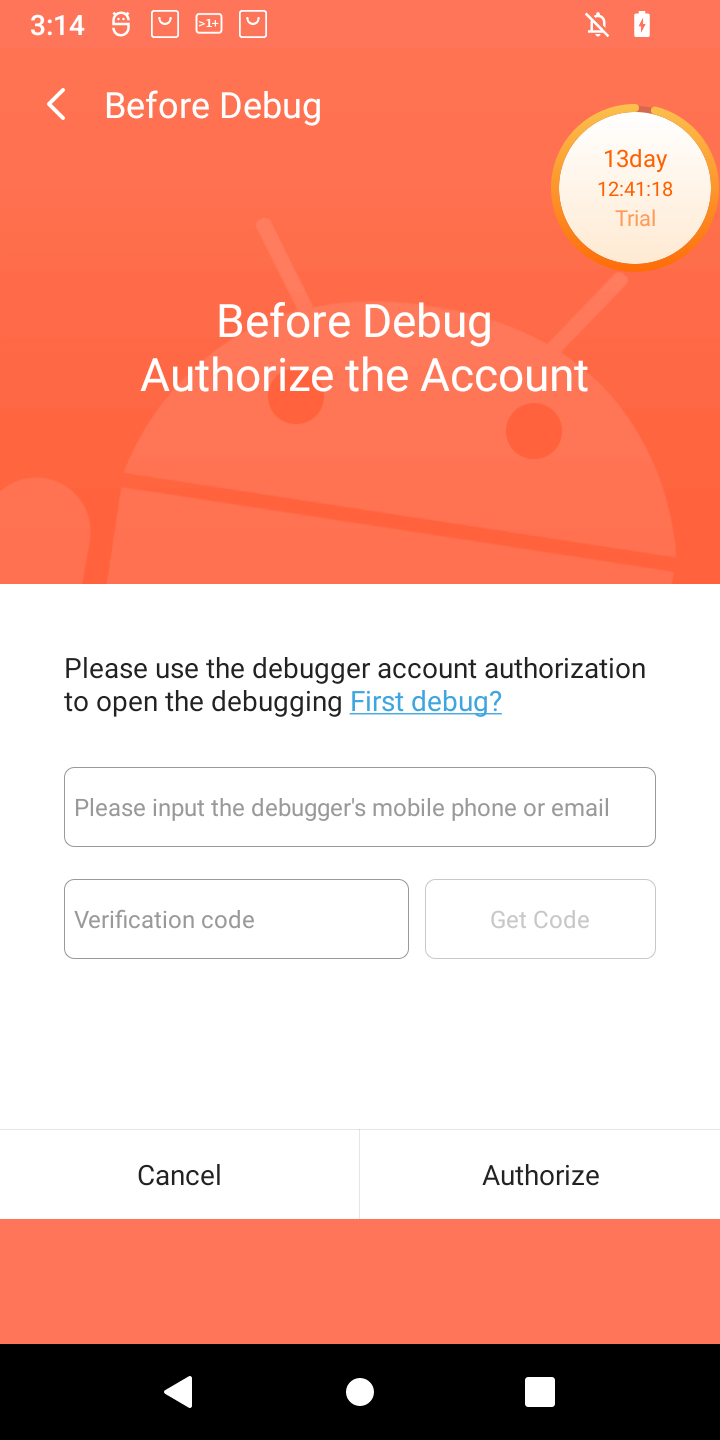

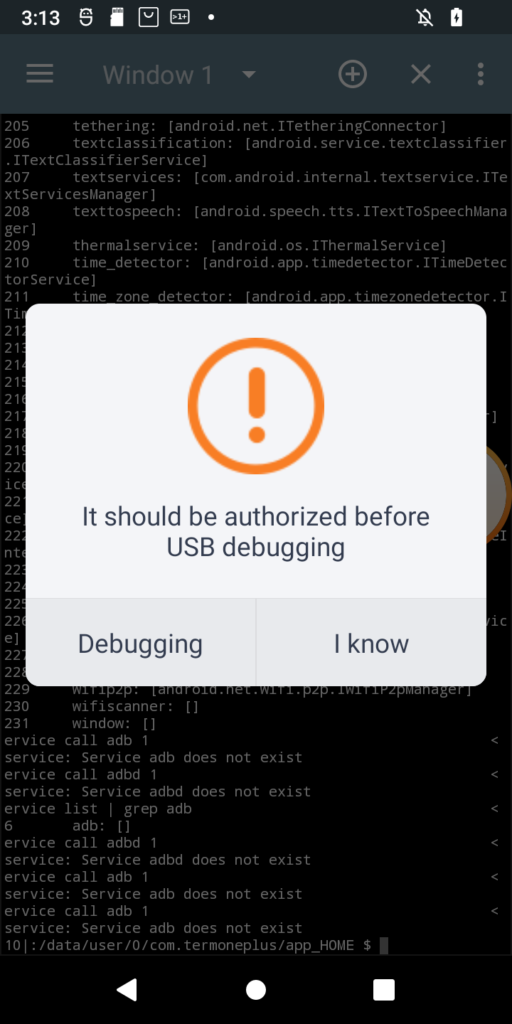

I just received a POS tablet, a Sunmi V2s to be precise. I bought it for a fraction of its price because of an alleged vendor lock, so I was prepared for a long fight for its freedom 😀 When I started playing with it, it turned out that it has a 14-day trial period during which it is possible to do almost anything in the system, just like on an ordinary Android device. Obviously, one of the first things to do (especially with the trial counting down) was to enable adb. Developer options were enabled the usual way, but after enabling adb and trying to connect from a PC, instead of the well-known confirmation window with the computer’s fingerprint, this popped up on the screen:

Of course, as is usual with Chinese vendors, the text is a literal word-by-word translation from Chinese, so I had to click both buttons to see the difference 🙂 Anyway, when Debugging is clicked, a login page for Sunmi partners appears. Registration is open, but as the name suggests it is meant for partners, so it would probably not succeed for me. So I needed another way, and it seems one exists. In my case the device had to be rooted first, but I will ignore that part for now. What I want to show is how to start digging into service calls from the command line in general (I think I have another interesting use case on a different device 🙂). So, without boring you any further, let’s get started. Continue reading “Authorizing adb connections from Android command line (and making other service calls from cli)”
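The actual calls are in the full post; as a rough, hedged sketch of the mechanics, the helpers below build an `adb shell service call SERVICE CODE ...` command (using the `i32` and `s16` argument types of Android's `service` tool) and decode the `Result: Parcel(...)` hex dump it prints. They assume the common dump layout of 32-bit words printed as 8 hex digits, with an ASCII preview in quotes. Service names and transaction codes differ between devices and Android versions, so none are given here.

```python
import re
import shlex
from typing import List, Optional, Union


def service_call_args(service: str, code: int, *params: Union[int, str]) -> List[str]:
    """Build an `adb shell service call` command: ints become i32, strings become s16."""
    args = ["adb", "shell", "service", "call", service, str(code)]
    for param in params:
        if isinstance(param, int):
            args += ["i32", str(int(param))]
        else:
            # adb shell joins its arguments into one device-side shell command,
            # so strings are quoted for that shell.
            args += ["s16", shlex.quote(param)]
    return args


_QUOTED = re.compile(r"'[^']*'")           # the ASCII preview column
_OFFSET = re.compile(r"0x[0-9a-fA-F]+:")   # "0x00000010:" offsets in multi-line dumps
_WORD = re.compile(r"\b[0-9a-fA-F]{8}\b")  # one 32-bit word, printed as 8 hex digits


def parcel_words(output: str) -> List[int]:
    """Extract the 32-bit words from a `Result: Parcel(...)` dump."""
    text = _OFFSET.sub(" ", _QUOTED.sub(" ", output))
    return [int(word, 16) for word in _WORD.findall(text)]


def parcel_string16(words: List[int], index: int) -> Optional[str]:
    """Decode a String16 whose length word (in UTF-16 units) sits at words[index]."""
    length = words[index]
    if length == 0xFFFFFFFF:  # -1 marks a null string
        return None
    # Words are printed as little-endian integers, so convert back to raw bytes.
    raw = b"".join(word.to_bytes(4, "little") for word in words[index + 1:])
    return raw[: 2 * length].decode("utf-16-le")
```

In a typical reply the first word is the status or exception code (0 on success), so a string result would usually be decoded with `parcel_string16(words, 1)`. The command list can be run with `subprocess.run(..., capture_output=True, text=True)`.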

New ccfactory on its way, binutils are already here

Since the beginning of this year I have been learning Docker. The first result of this interest on my GitHub was the ccfactory tool, which was supposed to provide an easy way to produce compiler toolchains, almost as if they were mass-produced in a factory, hence the name. However, since then I have learned a lot and gained some experience. It is now obvious to me that what I did then was not the best design. And because the project is still very young, I decided to start again from scratch and create a much better design that will be easy to develop and maintain.

Today it is time to publish the first step of this new design: binutils. I would not publish it yet, but Docker Hub allows only one private repository, so with my setup I cannot keep it private anyway. It is therefore a better idea to describe it, to avoid confusion. As I wrote, this first step is binutils: a simple container that contains binutils and nothing else. My ultimate goal is to build a toolchain based on gcc 3.3, which might sound weird, but it is what I needed in the past and it is the best way to prove what this new approach can achieve. With the previous approach, which I will call legacy from now on, I failed at that, and before failing I had written an even more complicated Dockerfile than originally planned. So, once finished, this one will hopefully prove that the new design is a good one. Continue reading “New ccfactory on its way, binutils are already here”

Unboxing, startup and first impression of Nezha board marketed as first affordable RISCV SBC

Some of you may have already heard about a new RISCV board that popped up in China recently. It is called Nezha, and it is the first available SBC with the new Allwinner D1 SoC, which has a RISCV core and is capable of running Linux. Its makers market it as the first affordable RISCV Linux SBC, and there is a lot of truth in this claim. This board may not be able to compete in any way with boards based on ARM Cortex-A cores. On the other hand, all previous RISCV offerings were in a different galaxy in terms of price: $999 for the HiFive Unleashed during its Crowd Supply campaign vs. $99 for Nezha on Indiegogo. They even claimed the price could go as low as $12, but according to them, global supply chain problems have made that impossible for now. We will have to wait for the pandemic troubles to end to see whether these claims hold up. Continue reading “Unboxing, startup and first impression of Nezha board marketed as first affordable RISCV SBC”

Docker image with just cURL

Lately I have been playing a bit with Docker containers. In the chain of problems I am currently working through, I needed a static cURL library on Debian. As it turned out, linking cURL statically is not an easy task; it causes a lot of problems, especially with the packages available in the Debian repositories. After a long fight with them I decided to prepare my own distribution of cURL. But instead of creating the usual deb package, I did it all in Docker, so the result is a Docker image. It uses multi-stage builds, where several build stages share only selected files. This let me create a base image, i.e. one built from scratch, which contains no files other than the ones we provide. So I included only the complete cURL install directory plus musl libc, so that the curl binary can run, as I did not want to tinker with cURL’s build system any more than I already had. The final image weighs only ~1.5 MiB, a really nice space saving compared to the usual approach to Docker images. Inside it, you can run the curl binary isolated from your operating system (to the extent that Docker provides; remember, it is not virtualization!). It is also possible to link your own binaries against its libcurl, this time completely statically!

As always, the sources are on GitHub, and this time there are also ready-to-use images on Docker Hub, so you can pull them directly without building them. The instructions are on both pages and contain nothing unusual for any Docker user, so I will not repeat them here.

Creating one-file Linux distribution with docker

A few months ago I wrote a tutorial about creating a Linux distribution with nothing but busybox as its userspace. In the meantime I have been working a bit with Docker, and automating the creation of that distribution with it sounded like a nice next step in learning Docker. So today I present a Linux distribution built with Docker and based on my previous tutorial. I called it busy-linux because, at the moment, it consists of nothing but busybox. My plan is to develop it further, most likely for private purposes only, so there might not be much happening in the project, but I definitely want to create a dynamically linked variant in the near future, as that is what my use case requires. In the meantime, feel free to try it yourself. Continue reading “Creating one-file Linux distribution with docker”

Busybox-based Linux distro from scratch

Today I would like to show something different from the reverse engineering that usually appears on my blog. I needed to prepare a Linux distro of my own to run on my PC. But not an ordinary operating system that we download from a web page and then install with a fancy graphical installer, choosing what we want and where. My goals were very specific. The first was to have it custom-compiled; with that in mind there aren’t many choices left (maybe Gentoo?). The second was to stay within 16 MiB. Why exactly that? Simple: I have an old (15 years old, to be precise) SD/MMC card made for Canon of exactly that size. A quick check showed me that this is possible. I tried buildroot, but it failed to fulfill the second requirement, and I decided not to continue with it, despite the obvious kernel module optimizations I could have made. It’s simply too complex for such a simple task. If not buildroot, then let’s see how to do it from scratch!

The plan

Basically, the plan is to have a custom Linux distro compiled from scratch. It may sound incredibly complex and hard to do, but it’s not. There are just a few problems one must learn to overcome. The most problematic constraint in my case is, obviously, the 16 MiB limit. To stay within it, I have to use busybox as my userspace, which, by the way, simplifies distro development significantly. When linked statically, busybox needs only one single binary to work correctly. So, to sum up, on the software side we need Linux and busybox. You may wonder how I want to boot that system, then. Well, I said I need Linux 🙂 Some people know and some do not that Linux can act as a boot loader of sorts. At least with UEFI, which is what I want to use, the kernel (built with its EFI stub) can be loaded directly by the UEFI firmware. That brings up another thing to note: I will describe how to prepare a distro for UEFI; for legacy BIOS it won’t be as simple as that.
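The reason a single busybox binary is enough is that it is a multi-call binary: links such as `/bin/sh` or `/bin/echo` all point to it, and it picks the applet from the name it was invoked as (`argv[0]`). busybox can create those links itself with `busybox --install -s` when built with that feature. The toy Python model below only illustrates that dispatch idea; the applets are hypothetical stand-ins, not busybox code.

```python
import os
import sys
from typing import Callable, Dict, List

Applet = Callable[[List[str]], int]


def _echo(args: List[str]) -> int:
    print(" ".join(args))
    return 0


def _true(args: List[str]) -> int:
    return 0


def _false(args: List[str]) -> int:
    return 1


# Toy applet table; busybox's real applets are far more involved.
APPLETS: Dict[str, Applet] = {"echo": _echo, "true": _true, "false": _false}


def dispatch(argv: List[str]) -> int:
    """Pick the applet from the name the program was invoked as."""
    name = os.path.basename(argv[0])  # e.g. a "/bin/echo" symlink -> "echo"
    rest = argv[1:]
    if name not in APPLETS and rest:
        # Invoked under its own name, e.g. "busybox echo hi": the applet is argv[1].
        name, rest = os.path.basename(rest[0]), rest[1:]
    applet = APPLETS.get(name)
    if applet is None:
        print(f"{name}: applet not found", file=sys.stderr)
        return 127
    return applet(rest)


if __name__ == "__main__":
    sys.exit(dispatch(sys.argv))
```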

The whole plan will look as follows:

- Get a compiler

- Compile the Linux kernel

- Compile busybox (statically and stripped!)

- Prepare an initramfs containing the whole userspace

- Format the drive as an EFI System Partition

- Combine the kernel and initramfs into a single binary

- Optionally sign the binary, in case we want Secure Boot enabled

- Add an entry to the firmware’s built-in UEFI boot manager
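For the initramfs step, the kernel expects a cpio archive in the “newc” format. It is normally produced with `find . | cpio -o -H newc` or the kernel’s own `usr/gen_init_cpio`, so the pure-Python writer below is just a minimal sketch of what such an archive contains, not the method from the full post. Assumptions: `/init` is a shell script (so the kernel needs script support), a `/dev/console` node (char 5:1) is included so init gets a console, and the applet list and init script are illustrative only.

```python
import stat
from typing import List, NamedTuple, Sequence, Tuple


class Entry(NamedTuple):
    name: str                       # path inside the archive, without a leading "/"
    mode: int                       # stat.S_IF* type bits | permission bits
    data: bytes = b""               # file contents, or the target of a symlink
    rdev: Tuple[int, int] = (0, 0)  # (major, minor) for device nodes


def _pad4(length: int) -> bytes:
    """Zero bytes needed to round `length` up to a multiple of 4."""
    return b"\0" * (-length % 4)


def _record(entry: Entry, ino: int) -> bytes:
    """Encode one entry as a newc ("070701") cpio record."""
    name = entry.name.encode() + b"\0"
    fields = [
        ino,
        entry.mode,
        0, 0,                                  # uid, gid: root
        2 if stat.S_ISDIR(entry.mode) else 1,  # nlink
        0,                                     # mtime
        len(entry.data),                       # filesize
        0, 0,                                  # devmajor, devminor
        entry.rdev[0], entry.rdev[1],          # rdevmajor, rdevminor (device nodes)
        len(name),                             # namesize, including the trailing NUL
        0,                                     # check: unused in newc
    ]
    record = b"070701" + b"".join(b"%08X" % field for field in fields) + name
    record += _pad4(len(record))  # header + name are padded to 4 bytes
    return record + entry.data + _pad4(len(entry.data))


def build_cpio(entries: Sequence[Entry]) -> bytes:
    body = b"".join(_record(e, ino) for ino, e in enumerate(entries, start=1))
    return body + _record(Entry("TRAILER!!!", 0), 0)  # end-of-archive marker


def busybox_initramfs(busybox: bytes, applets: Sequence[str] = ("sh", "mount")) -> bytes:
    directory = stat.S_IFDIR | 0o755
    init = b"#!/bin/sh\nmount -t proc proc /proc\nmount -t sysfs sysfs /sys\nexec /bin/sh\n"
    entries: List[Entry] = [
        Entry("bin", directory),
        Entry("dev", directory),
        Entry("proc", directory),
        Entry("sys", directory),
        Entry("dev/console", stat.S_IFCHR | 0o600, rdev=(5, 1)),
        Entry("bin/busybox", stat.S_IFREG | 0o755, busybox),
        Entry("init", stat.S_IFREG | 0o755, init),
    ]
    # Each applet is just a relative symlink to the one multi-call binary.
    entries += [Entry(f"bin/{a}", stat.S_IFLNK | 0o777, b"busybox") for a in applets]
    return build_cpio(entries)
```

Writing the result to a `.cpio` file and pointing `CONFIG_INITRAMFS_SOURCE` at it is one way to embed the userspace into the kernel image itself, which fits the “single binary” step of the plan.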

Along the way, I am also going to show a few ways to debug the system in case of problems. Continue reading “Busybox-based Linux distro from scratch”

Meet CC Factory – a factory for cross compilers

Needing a tailored cross compiler is a problem I have encountered a couple of times in the past. Of course there are solutions, like the great crosstool-ng or the more complex buildroot, and in most cases crosstool-ng (ct-ng) does the job. But whatever tool we use, it always has its own drawbacks. For ct-ng these are the small number of supported versions of toolchain components and a heavy dependence on the environment in which it is run. The latter is even more problematic because of the way resuming an interrupted build works in ct-ng. Obviously, if you want to build, for example, one compiler for ARM and one for MIPS, both using the latest tools, it is not a problem.

But I have another use case for compiling toolchains. I do some reverse engineering from time to time. Nowadays many products have Linux under the hood, and often there is no way to get an SDK for them. But being able to build something for the device can help a lot, either to run it there or to link it with the tools found on the device and run it in an emulator. I can also imagine that outside the reverse engineering field there might be a need for a toolchain in an exact configuration that is sadly not available via ct-ng or buildroot. Anyway, wherever ct-ng or buildroot are not applicable, there is a third way: Docker. This is the way I chose, and this is how CC Factory came about. It is a Docker container that builds a gcc cross compiler on first startup and drops you into a container with a working compiler for the platform of your choice. Porting it to another architecture or a different tool version does not require much effort, unless the changes between versions are really significant. Continue reading “Meet CC Factory – a factory for cross compilers”